A photo of Lobna Hemid is displayed on a friend’s smartphone in Madrid on March 29, 2024. She was killed by her husband in 2022. — The New York Times

MADRID: In a small apartment outside Madrid on Jan 11, 2022, an argument over household chores turned violent when Lobna Hemid’s husband smashed a wooden shoe rack and used one of the broken pieces to beat her. Her screams were heard by neighbours. Their four children, ages six to 12, were also home.

Hemid’s husband of more than a decade, Bouthaer el Banaisati, regularly punched and kicked her, she later told police. He also called her “disgusting” and “worthless,” according to the police report.

Before Hemid left the station that night, police had to determine if she was in danger of being attacked again and needed support. A police officer clicked through 35 yes or no questions – Was a weapon used? Were there economic problems? Has the aggressor shown controlling behaviours? – to feed into an algorithm called VioGén that would help generate an answer.

VioGén produced a score:

Low Risk

Lobna Hemid

2022 Madrid

The police accepted the software’s judgment and Hemid went home with no further protection. El Banaisati, who was imprisoned that night, was released the next day. Seven weeks later, he fatally stabbed Hemid several times in the chest and abdomen before killing himself. She was 32.

Spain has become dependent on an algorithm to combat gender violence, with the software so woven into law enforcement that it is hard to know where its recommendations end and human decision-making begins. At its best, the system has helped police protect vulnerable women and, overall, has reduced the number of repeat attacks in domestic violence cases. But the reliance on VioGén has also resulted in victims, whose risk levels are miscalculated, getting attacked again – sometimes leading to fatal consequences.

Spain now has 92,000 active cases of gender violence victims who were evaluated by VioGén, with most of them – 83% – classified as facing little risk of being hurt by their abuser again. Yet about 8% of women whom the algorithm found to be at negligible risk and 14% of those at low risk have reported being harmed again, according to Spain’s Interior Ministry, which oversees the system.

At least 247 women have also been killed by their current or former partner since 2007 after being assessed by VioGén, according to government figures. While that is a tiny fraction of gender violence cases, it points to the algorithm’s flaws. The New York Times found that in a judicial review of 98 of those homicides, 55 of the slain women were scored by VioGén as negligible or low risk for repeat abuse.

Spanish police are trained to overrule VioGén’s recommendations depending on the evidence, but accept the risk scores about 95% of the time, officials said. Judges can also use the results when considering requests for restraining orders and other protective measures.

“Women are falling through the cracks,” said Susana Pavlou, director of the Mediterranean Institute of Gender Studies, who co-authored a European Union report about VioGén and other police efforts to fight violence against women. The algorithm “kind of absolves the police of any responsibility of assessing the situation and what the victim may need.”

A global trend

Spain exemplifies how governments are turning to algorithms to make societal decisions, a global trend that is expected to grow with the rise of artificial intelligence. In the United States, algorithms help determine prison sentences, set police patrols and identify children at risk of abuse. In the Netherlands and Britain, authorities have experimented with algorithms to predict who may become criminals and to identify people who may be committing welfare fraud.

Few of the programs have such life or death consequences as VioGén. But victims interviewed by the Times rarely knew about the role the algorithm played in their cases. The government also has not released comprehensive data about the system’s effectiveness and has refused to make the algorithm available for outside audit.

VioGén was created to be an unbiased tool to help police with limited resources identify and protect the women most at risk of being assaulted again. The technology was meant to create efficiencies by helping police prioritise the most urgent cases, while focusing less on those calculated by the algorithm as lower risk. Victims classified as higher risk get more protection, including regular patrols by their home, access to a shelter and police monitoring of their abuser’s movements. Those with lower scores get less support.

In a statement, the Interior Ministry defended VioGén and said the government was the “first to carry out self-criticism” when mistakes occur. It said homicide was so rare that it was difficult to accurately predict, but added it was an “incontestable fact” that VioGén has helped reduce violence against women.

Since 2007, about 0.03% of Spain’s 814,000 reported victims of gender violence have been killed after being assessed by VioGén, the ministry said. During that time, repeat attacks have fallen to about 15% of all gender violence cases from 40%, according to government figures.

“If it weren’t for this, we would have more homicides and gender-based violence,” said Juan José López Ossorio, a psychologist who helped create VioGén and works for the Interior Ministry.

Yet victims and their families are grappling with the consequences when VioGén gets it wrong.

“Technology is fine, but sometimes it’s not and then it’s fatal,” said Jesús Melguizo, Hemid’s brother-in-law, who is a guardian for two of her children. “The computer has no heart.”

‘Effective but not perfect’

VioGén started with a question: Can police predict an assault before it happens?

After Spain passed a law in 2004 to address violence against women, the government assembled experts in statistics, psychology and other fields to find an answer. Their goal was to create a statistical model to identify women most at risk of abuse and to outline a standardised response to protect them.

“It would be a new guide for risk assessment in gender violence,” said Antonio Pueyo, a psychology professor at the University of Barcelona who later joined the effort.

The team took a similar approach to how insurance companies and banks predict the likelihood of future events, such as house fires or currency swings. They studied national crime statistics, police records and the work of researchers in Britain and Canada to find indicators that appeared to correlate with gender violence. Substance abuse, job loss and economic uncertainty were high on the list.

Then they came up with a questionnaire for victims so their answers could be compared with historical data. Police would fill in the answers after interviewing a victim, reviewing documentary evidence, speaking with witnesses and studying other information from government agencies. Answers to certain questions carried more weight than others, like if an abuser displayed suicidal tendencies or showed signs of jealousy.

These are some of the questions answered by women:

6. In the last six months, has there been an escalation of aggression or threats?

Yes No N/A

26. Has the aggressor demonstrated addictive behaviors or substance abuse?

Yes No N/A

34. In the last six months, has the victim expressed to the aggressor her intention to sever their relationship?

Yes No N/A

The system produced a score for each victim: negligible risk, low risk, medium risk, high risk or extreme risk. A higher score would result in police patrols and the tracking of an aggressor’s movements. In extreme cases, police would assign 24-hour surveillance. Those with lower scores would receive fewer resources, mainly follow-up calls.
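The mechanics described above, weighted yes-or-no answers rolled up into one of five risk levels, can be illustrated with a minimal sketch. It is not VioGén: the government has not released the algorithm, so the weights, the cut-off points and the `risk_level` function below are all hypothetical, chosen only to show how a weighted questionnaire of this kind could work. The three questions are the ones quoted in this article.

```python
from dataclasses import dataclass
from typing import Mapping

# The five levels named in the article, lowest to highest.
LEVELS = ["negligible", "low", "medium", "high", "extreme"]


@dataclass(frozen=True)
class Question:
    number: int
    text: str
    weight: float  # HYPOTHETICAL: the real weights are not public


# Three of the 35 questions, as quoted in the article. Weights are invented.
QUESTIONS = [
    Question(6, "Escalation of aggression or threats in the last six months?", 2.0),
    Question(26, "Aggressor shows addictive behaviours or substance abuse?", 1.0),
    Question(34, "Victim told the aggressor she intends to end the relationship?", 2.0),
]

# HYPOTHETICAL cut-offs, as fractions of the maximum achievable weight.
THRESHOLDS = [0.2, 0.4, 0.6, 0.8]


def risk_level(answers: Mapping[int, str]) -> str:
    """Map answers ('yes', 'no' or 'n/a', keyed by question number) to a level."""
    # Questions answered N/A, or not answered at all, are left out of the total.
    applicable = [q for q in QUESTIONS if answers.get(q.number, "n/a") != "n/a"]
    if not applicable:
        raise ValueError("no applicable answers; a score cannot be computed")
    maximum = sum(q.weight for q in applicable)
    score = sum(q.weight for q in applicable if answers[q.number] == "yes")
    fraction = score / maximum
    # Count how many cut-offs the fraction reaches; that count indexes the level.
    band = sum(fraction >= t for t in THRESHOLDS)
    return LEVELS[band]


print(risk_level({6: "yes", 26: "yes", 34: "yes"}))  # highest band
print(risk_level({6: "no", 26: "no", 34: "no"}))     # lowest band
```

In a scheme like this, every “no” pulls the result toward the lower bands, whether it reflects the facts or information the victim was unable to share.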

Predictive algorithms to address domestic violence have been used in parts of Britain, Canada, Germany and the United States, but not on such a national scale. In Spain, the Interior Ministry introduced VioGén everywhere but in the Catalonia region and Basque Country.

Law enforcement initially greeted the algorithm with scepticism, police and government officials told the Times, but it soon became a part of everyday police business.

Before VioGén, investigations were “based on the experience of the policeman,” said Pueyo, who remains affiliated with the program. “Now this is organised and guided by VioGén.”

VioGén is a source of impartial information, he said. If a woman attacked late at night was seen by a young police officer with little experience, VioGén could help detect the risk of future violence.

“It’s more efficient,” Pueyo said.

Over the years, VioGén has been refined and updated, including with metrics that are believed to better predict homicide. Police have also been required to conduct a follow-up risk assessment within 90 days of an attack.

But Spain’s faith in the system has surprised some experts. Juanjo Medina, a senior researcher at the University of Seville who has studied VioGén, said the system’s effectiveness remains unclear.

“We’re not good at forecasting the weather, let alone human behaviour,” he said.

Francisco Javier Curto, a commander for the military police in Seville, said VioGén helps his teams prioritise, but requires close oversight. About 20 new cases of gender violence arrive every day, each requiring investigation. Providing police protection for every victim would be impossible given staff sizes and budgets.

“The system is effective but not perfect,” he said, adding that VioGén is “the best system that exists in the world right now”.

José Iniesta, a civil guard in Alicante, a southeastern port city, said not enough police officers are trained to keep up with growing case loads. A leader in the United Association of Civil Guards, a union representing officers in rural areas, he said that outside of big cities, the police often must choose between addressing violence against women or other crimes.

Sindicato Unificado de Policía, a union that represents national police officers, said even the most effective technology cannot make up for a lack of trained experts. In some places, a police officer is assigned to work with more than 100 victims.

“Agents in many provinces are overwhelmed,” the union said in a statement.

When attacks happen again

The women who have been killed after being assessed by VioGén can be found across Spain.

One was Stefany González Escarraman, a 26-year-old living near Seville. In 2016, she went to the police after her husband punched her in the face and choked her. He threw objects at her, including a kitchen ladle that hit their three-year-old child. After police interviewed Escarraman for about five hours, VioGén determined she had a “negligible risk” of being abused again:

Negligible Risk

Stefany González Escarraman

2016 Seville

The next day, Escarraman, who had a swollen black eye, went to court for a restraining order against her husband. Judges can serve as a check on the VioGén system, with the ability to intervene in cases and provide protective measures. In Escarraman’s case, the judge denied a restraining order, citing VioGén’s risk score and her husband’s lack of criminal history.

About a month later, Escarraman was stabbed by her husband multiple times in the heart in front of their children. In 2020, her family won a verdict against the state for failing to adequately measure the level of risk and provide sufficient protection.

“If she had been given the help, maybe she would be alive,” said Williams Escarraman, her brother.

In 2021, Eva Jaular, who lived in Liaño in northern Spain, was slain by her former boyfriend after being classified as low risk by VioGén. He also killed their 11-month-old daughter. Six weeks earlier, he had jabbed a knife into a couch cushion next to where Jaular sat and said, “look how well it sticks”, according to a police report.

Low Risk

Eva Jaular

2021 Liaño

Since 2007, 247 of the 990 women killed in Spain by a current or former partner were previously scored by VioGén, according to the Interior Ministry. The other victims had not been previously reported to the police, so were not in the system. The ministry declined to disclose the VioGén risk scores of the 247 who were killed.

The Times instead analysed reports from a Spanish judicial agency, released almost every year from 2010 to 2022, which included information about the risk scores of 98 women who were later killed. Of those, 55 had been classified as negligible risk or low risk.
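The proportions behind these figures can be checked with simple arithmetic. The short sketch below uses only numbers quoted in this article; the variable names and the `percent` helper are illustrative.

```python
# Figures quoted in the article (Interior Ministry and judicial agency reports).
killed_after_assessment = 247    # women killed since 2007 after a VioGén assessment
reported_victims = 814_000       # reported victims of gender violence since 2007
killed_by_partner_total = 990    # women killed by a current or former partner since 2007
reviewed_homicides = 98          # homicides with risk scores in the judicial reports
scored_negligible_or_low = 55    # of those, scored negligible or low risk


def percent(part: int, whole: int) -> float:
    """Return part as a percentage of whole."""
    return 100 * part / whole


share_of_reported = percent(killed_after_assessment, reported_victims)
share_of_killed = percent(killed_after_assessment, killed_by_partner_total)
share_scored_low = percent(scored_negligible_or_low, reviewed_homicides)

print(f"{share_of_reported:.2f}% of reported victims")      # about 0.03%
print(f"{share_of_killed:.1f}% of partner homicides")       # about 24.9%
print(f"{share_scored_low:.1f}% of reviewed homicides")     # about 56.1%
```

So roughly a quarter of the women killed by a partner had been assessed by VioGén, and more than half of the reviewed cases had been scored negligible or low risk.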

In a statement, the Interior Ministry said that analysing the risk scores of homicide victims doesn’t provide an accurate picture of VioGén’s effectiveness because some homicides happened more than a year after the first assessment, while others were committed by a different partner.

Why the algorithm incorrectly classifies some women varies and isn’t always clear, but one reason may be the poor quality of information fed into the system. VioGén is ideally suited for cases when a woman, in the moments after being attacked, can provide complete information to an experienced police officer who has time to fully investigate the incident.

That does not always happen. Fear, shame, economic dependency, immigration status and other factors can lead a victim to withhold information. Police are also often squeezed for time and may not fully investigate.

“If we already enter erroneous information into the system, how can we expect the system to give us a good result?” said Elisabeth, a victim who now works as a gender violence lawyer. She spoke on the condition her full name not be used, for fear of retaliation by her former partner.

Luz, a woman from a village in southern Spain, said she was repeatedly labelled low risk after attacks by her partner because she was afraid and ashamed to provide complete information to the police, some of whom she knew personally. She got her risk score increased to extreme only after working with a lawyer specialising in gender violence cases, leading to round-the-clock police protection.

Extreme Risk

Luz

2019 Southern Spain

“We women keep a lot of things silent not because we want to lie but out of fear,” said Luz, who spoke on the condition her full name not be used for fear of retaliation by her attacker, who was imprisoned. “VioGén would be good if there were qualified people who had all the necessary tools to carry it out.”

Victim groups said that psychologists or other trained specialists should lead the questioning of victims rather than the police. Some have urged the government to mandate that victims be allowed to be accompanied by somebody they trust to help ensure full information is given to authorities, something that is now not allowed in all areas.

“It’s not easy to report a person you’ve loved,” said María, a victim from Granada in southern Spain, who was labelled medium risk after her partner attacked her with a dumbbell. She asked that her full name not be published for fear of retaliation by him.

Medium Risk

María

2023 Granada

Ujué Agudo, a Spanish researcher studying the influence of AI on human decisions, said technology has a role in solving societal problems. But it could reduce the responsibility of humans to approving the work of a machine, rather than conducting the necessary work themselves.

“If the system succeeds, it’s a success of the system. If the system fails, it’s a human error that they aren’t monitoring properly,” said Agudo, a co-director of Bikolabs, a Spanish civil society group. A better approach, she said, was for people “to say what their decision is before seeing what the AI thinks”.

Spanish officials are exploring incorporating AI into VioGén so it can pull data from different sources and learn more on its own. Ossorio, a creator of VioGén who works for the Interior Ministry, said the tools can be applied to other areas, including workplace harassment and hate crimes.

The systems will never be perfect, he said, but neither is human judgment. “Whatever we do, we always fail,” he said. “It’s unsolvable problems.”

Last month, the Spanish government called an emergency meeting after three women were killed by former partners within a 24-hour span. One victim, a 30-year-old from central Spain, had been classified by VioGén as low risk.

At a news conference, Interior Minister Fernando Grande-Marlaska said he still had “absolute confidence” in the system.

‘Always cheerful’

Hemid, who was killed outside Madrid in 2022, was born in rural Morocco. She was 14 when she was introduced at a family wedding to el Banaisati, who was 10 years older than her. She was 17 when they married. They later moved to Spain so he could pursue steadier work.

Hemid was outgoing and gregarious, often seen racing to get her children to school on time, friends said. She learned to speak Spanish and sometimes joined children playing soccer in the park.

“She was always cheerful,” said Amelia Franas, a friend whose children went to the same school as Hemid’s children.

Few knew that abuse was a fixture of Hemid’s marriage. She spoke little about her home life, friends said, and never called the police or reported el Banaisati before the January 2022 incident.

VioGén is intended to identify danger signs that humans may overlook, but in Hemid’s case, it appears that police missed some clues. Her neighbours told the Times they were not interviewed, nor were administrators at her children’s school, who said they had seen signs of trouble.

Family members said el Banaisati had a life-threatening form of cancer that made him behave erratically. Many blamed underlying discrimination in Spain’s criminal system that overlooks violence against immigrant women.

Police haven’t released a copy of the assessment that produced Hemid’s low risk score from VioGén. A copy of a separate police report shared with the Times noted that Hemid was tired during questioning and wanted to end the interview to get home.

A few days after the January 2022 attack, Hemid won a restraining order against her husband. But el Banaisati largely ignored the order, family and friends said. He moved into an apartment less than 500 meters from where Hemid lived and continued threatening her.

Melguizo, her brother-in-law, said he appealed to Hemid’s assigned public lawyer for help, but was told the police “won’t do anything, it has a low risk score”.

The day after Hemid was stabbed to death, she had a court date scheduled to officially file for divorce. – The New York Times

A photo of Lobna Hemid is displayed on a friend’s smartphone in Madrid on March 29, 2024. She was killed by her husband in 2022. (Ana Maria Arevalo Gosen/The New York Times)

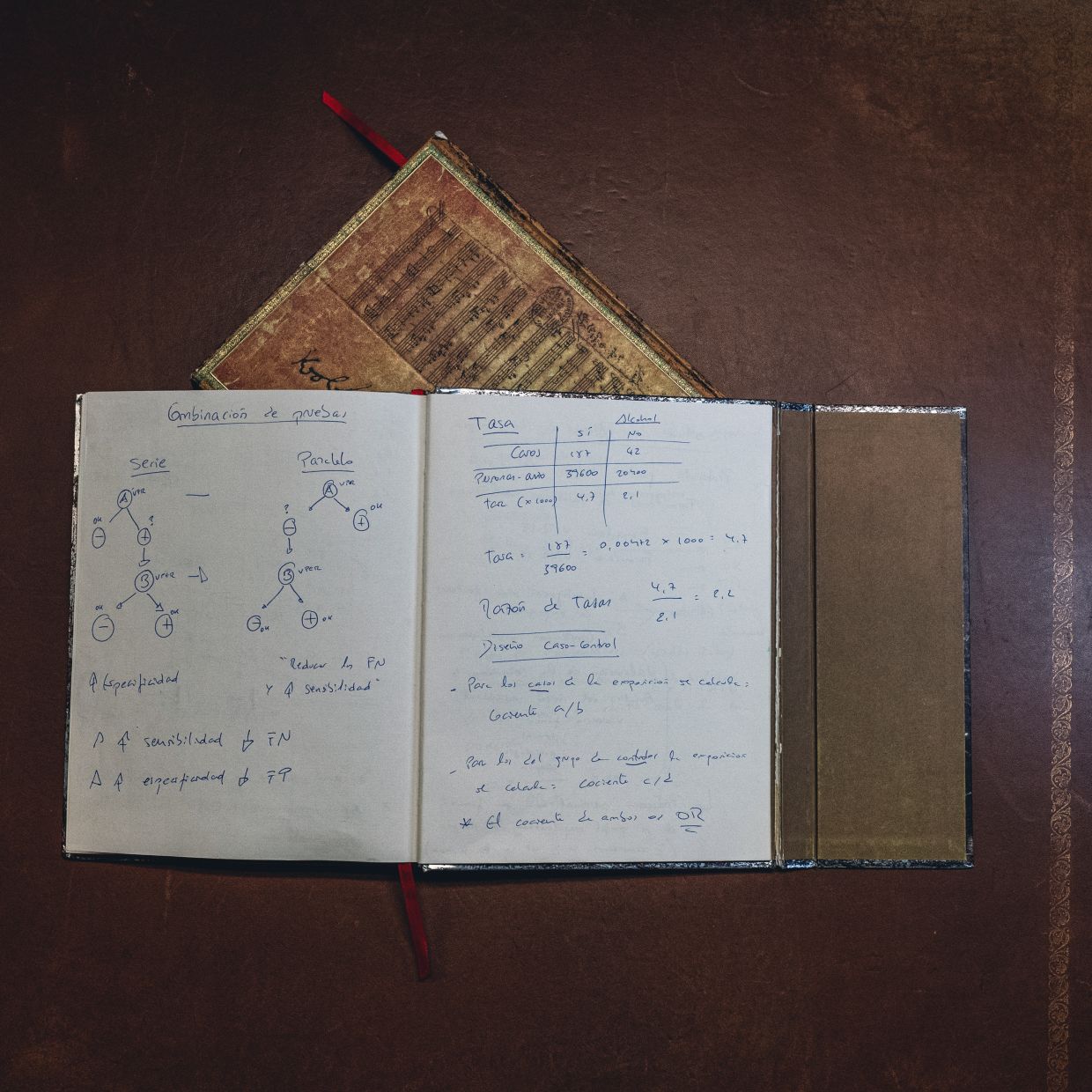

A notebook is displayed in the office of Juan José López Ossorio, a government official who helped create the VioGén system, in Madrid on April 30, 2024. It is open to show some initial designs and research strategies for what became VioGén, including a decision tree and calibration techniques for predicting intimate partner homicides. — The New York Times

Some initial designs and research strategies for what became VioGén, including a decision tree and calibration techniques for predicting intimate partner homicides. (Ana Maria Arevalo Gosen/The New York Times)

Francisco Javier Curto, a commander for the military police in Seville who oversees gender violence incidents in the province, in his office in the Spanish city on March 30, 2024. VioGén is 'the best system that exists in the world right now', he says. — The New York Times

Francisco Javier Curto, a commander for the military police in Seville who oversees gender violence incidents in the province. VioGén is “the best system that exists in the world right now,” he says. (Ana Maria Arevalo Gosen/The New York Times)

Juan José López Ossorio, a government official who helped create the VioGén system, in his office in Madrid on April 30, 2024. Spain has become reliant on the VioGén algorithm to score how likely a domestic violence victim is to be abused again and what protection to provide — sometimes leading to fatal consequences. — The New York Times

Juan José López Ossorio, a government official who helped create the VioGén system, in his office in Madrid on April 30, 2024. (Ana Maria Arevalo Gosen/The New York Times)

A memorial bouquet of roses and eucalyptus adorns a lamppost at the entrance to the street where Lobna Hemid lived in Madrid on March 29, 2024. Hemid was killed by her husband in 2022. — The New York Times

A memorial bouquet of roses and eucalyptus adorns a lamppost at the entrance to the street where Lobna Hemid lived in Madrid. (Ana Maria Arevalo Gosen/The New York Times)